VARIETIES OF EXTERNALISM

2nd XSCAPE Workshop

April 10th 2026

Gallery Room

Level 3 of Bramber House, University of Sussex

SCHEDULE

8.15

Registration, coffee, tea, and mingling | Outside the Gallery Room, Bramber House Level 3

8.45 - 9.45

Some XSCAPE Routes

Andy Clark and Laura Desiree di Paolo

9.45 - 10.45

Miriam Haidle

10.45 - 11.45

Robert William Clowes

11.45 - 12.00

Break

12.00 - 13.00

Mahault Albarracin

13.00 - 14.00

Sandwich Lunch (provided)

14.00 - 15.00

Joel Krueger

15.00 - 16.00

Lucy Osler

16.00 - 17.00

Entropy Panel

17.00 - 17.15

Break

17.15 - 18.15

John Sutton

18.15 - 19.15

Drinks & Posters

20.00 >

Conference Dinner*

*Wahaca, 160 - 161 North St, Brighton and Hove, Brighton BN1 1EZ.

The dinner is free for Speakers and Chairs

Others are welcome but the number of places is limited

For availability, please contact:

Ben White: benjamin.white@psl.eu

or

Axel Constant: axel.constant.pruvost@gmail.com

THEME

Debates over cognitive externalism are typically framed around a contrast between two positions. Content externalism holds that the contents of our mental states are constitutively dependent on features of the external world, for example, that my belief about water depends on water being H₂O. Vehicle externalism, by contrast, maintains that the physical vehicles realising cognitive processes can extend beyond the biological organism, as when notebooks or technologies function as parts of memory and reasoning. This framing risks obscuring both the diversity of externalising practices and the depth of disagreement they generate. Recent work, particularly on vehicle externalism, has expanded far beyond canonical cases of memory and problem solving to encompass affective regulation, social cognition, narrative identity, and other forms of higher order cognition. Yet the resulting landscape remains conceptually fragmented, with little consensus on what externalisation amounts to or which distinctions matter. This workshop takes these tensions as its starting point. We ask whether the content–vehicle distinction captures the most important dimensions of cognitive externalism, or whether it constrains theorising too narrowly. In particular, we examine when it is relevant to distinguish between uploading cognitive functions into external systems and offloading them onto external supports, and what follows from this distinction. We will consider practical implications for mental health, legal responsibility, therapeutic practice, and cognitive development, as well as possible links between externalisation and the degradation or reorganisation of cognitive capacities at both individual and population levels. Finally, we will assess the role of emerging technologies, especially AI-driven systems, in reshaping human cognitive practices and the distribution of cognitive labour.

SPEAKERS

John Sutton

-

John Sutton is Leverhulme International Professor and Director of the Centre for the Sciences of Place and Memory at the University of Stirling in Scotland. He is a cognitive philosopher specializing in memory, skill, and history, with recent work addressing place and wayfinding. His recent papers deploy theory and methods from distributed cognition to address dreams, martial arts, film editing, joint expertise, cognitive change in the Neolithic, improvisation, and aging together.

-

Externalism did not begin with ‘The Extended Mind’ and Being There; and, if it is true, it has (contra Block) always been true, for we are natural-born cyborgs. But the distributed cognitive and affective ecosystems of 2026 do differ dramatically from those of the mid-1990s: the interaction dynamics of coordination and combination among heterogeneous socio-technical components have shifted significantly over these 30 years, as they always tend to do. I offer one partial and prejudiced approach to the varieties of externalism on offer today, with a dual focus on time and place, on remembering and on spatial cognition and navigation. Themes include: how even third-wave anti-individualists can and should theorize individuals; vulnerability and bad scaffolding; relations between externalism and indigenous psychologies; and the centrality of skill and expertise in understanding digital technologies and AI usage.

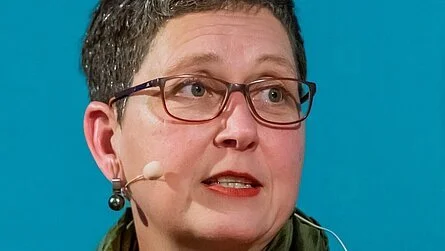

Mahault Albarracin

-

Mahault Albarracin is a researcher in cognitive computing and computational cognitive science working at the intersection of artificial intelligence, social cognition, and philosophy of mind. Her research develops formal models of agency, social coordination, and collective intelligence grounded in active inference and Bayesian approaches to belief dynamics.

She is an Associate Researcher with the Laboratoire d’Analyse Cognitive de l’Information (LANCI) at Université du Québec à Montréal and a Visiting Researcher in Social Robotics at Sheffield Hallam University. Her work combines formal modelling, simulation, and embodied robotics to investigate theory of mind, empathy, epistemic alignment, and the dynamics of shared intentionality in artificial and biological systems.

Dr. Albarracin’s broader interests include the epistemology of machine intelligence, the structure of social norms and scripts, and the role of predictive processing frameworks in explaining affective and interpersonal phenomena. She regularly lectures on artificial intelligence, cognition, and society, and her publications span computational modelling, philosophy of cognitive science, and social systems theory.

-

Mahault Albarracín’s program offers unusually direct conceptual and computational bridges between (i) scripts as socially shared normative patterns, (ii) affordance landscapes as action possibilities structured by cultural practices, and (iii) active inference as a modelling framework in which action and perception are forms of inference over a generative model of the world. Her work formalises scripts as both internal schemata and external social orders (dual-aspect scripts), enabling a principled account of “material minds” in which environments carry actionable norms rather than merely “context.” Norms become externalised into material environments via social activity, and are “brought about” in ongoing engagement rather than located strictly inside agents or strictly “out there.”

The key design opportunity for HCI/robotics is to treat artefacts and spaces as normative interfaces: they do not only present physical constraints, but also cue deontic expectations (“what one should do here”). This suggests a concrete robotics agenda: build robots that can (1) perceive artefacts and spatial configurations as deontic cues, (2) infer which social script is currently in play, and (3) choose actions that are simultaneously task-effective and norm-compliant, while remaining sensitive to pluralism, ambiguity, and contestability of norms. This aligns naturally with active-inference notions of deontic value (policy priors elicited by cues), which already formalise how observable cues can steer agent policy selection toward culturally expected behaviour.

A necessary second half of the story, especially salient for “material minds”, is the inverse direction: robots are themselves artefacts that can reshape human routines, attention, and social meaning. Longitudinal and ethnographic studies of domestic robots (notably robot vacuums) show households actively reorganising cleaning practices, spatial arrangements, and affective relationships around the device. This motivates a “reflexive externalism”: robots should be designed not only to read scripts but also to publish scripts responsibly, via transparent cues, user-contestable norms, and careful limits on persuasive or manipulative influence.

Miriam Haidle

-

Miriam Haidle is a palaeoanthropologist and Palaeolithic archaeologist. She teaches at the University of Tübingen and held short-term lectureships in Cambodia for several years. Since 2008, she has been the scientific coordinator of the research centre ‘The Role of Culture in Early Expansions of Humans’ (ROCEEH) of the Heidelberg Academy of Sciences and Humanities. Her research focuses on cultural and cognitive evolution. She developed the method of cognigrams for the systematic comparative analysis of tool behaviour. Currently, she is working on how archaeological finds can provide insights into prehistoric communities of practice.

-

When we think of groups spanning multiple generations rather than individual people, when we think of more than 3 million years of hominin cultural becoming rather than modern humans, we must refer to externalism to understand how people think and learn. From a paleoanthropological perspective, this talk examines whether the recognition of a 4E mind (embodied, enacted, embedded, extended) is sufficient to describe processes of cultural transmission and innovation. Using examples of tool behaviour in animals and from human evolution, situatedness, distribution, and development in three dimensions are discussed as additional external parameters of the mind.

Joel Krueger

-

Joel Krueger is Associate Professor in Philosophy at the University of Exeter. He works on issues in phenomenology, philosophy of mind, and cognitive science. This includes topics related to embodied cognition, emotions, social cognition, psychopathology, loneliness, and human-AI interactions. He also has interests in Asian and comparative philosophy, philosophy of music, and pragmatism.

-

There is increasing interest in exploring how AI-powered assistive technologies and ambient smart environments can promote independent living and wellbeing in dementia care. This is a promising development. However, I argue that emerging discussions will benefit from a subtle reframing. Current discussions tend to adopt a “functional support” framework prioritising surveillance, support, and safety. These are important objectives. But a more nuanced approach is needed, one recognizing the need to explore how this tech might also cultivate what I term our “experiential autonomy”: our capacity for aesthetic creativity, play and improvisation, and desire for meaningful risk-taking. After some background and scene setting, I consider this distinction and how it applies to AI-supported dementia care. I conclude by looking at what this means for crafting non-dominating, person-centred intervention strategies.

Robert William Clowes

-

Robert W Clowes is a researcher with the Lisbon Mind Cognition and Knowledge Group and the Institute for Philosophy II, Ruhr University Bochum. He works at the intersection of philosophy of mind, philosophy of technology and cognitive science. He received his PhD at the University of Sussex where he also taught for many years. He is principal investigator on two projects, one on Companion Generative AI (funded by the IBM Tech Ethics Lab, Notre Dame University, US) and another on Cognitive Flourishing in the Context of GenAI (funded by FCSH, Nova Lisbon). He recently edited a special issue on the Mind-Technology Problem for the journal Social Epistemology. Currently he is completing a book on the Cognitive Ecology of Generative AI.

-

With the advent of Generative AI we have produced not just a new technology but a new kind of technological background to our minds. This background poses deep challenges to what we think minds are and ultimately poses questions about the kinds of beings we are, and who we might become.

My talk starts by saying something about Large Language Models (LLMs) and how we have tended to mis-imagine them based on the history of AI. I see them working on very different principles to the AI paradigms of the past, namely GOFAI reasoning machines or Embodied Situated Robots. They challenge many ideas arising from both of these paradigms about what intelligent machines might be and by extension what human cognition is. I’ll argue that LLMs are best seen as constrained confabulators: systems that invent dialogue and narrative in a coherent conceptual space. They even provide models for some of our own deep cognitive abilities, echoing some earlier ideas about human narrative intelligence (Bruner 1990, Fisher 1984), and the role of confabulation in our mental lives (Dennett 1992, Wegner 2002).

The confabulatory abilities LLMs possess pose theoretical challenges for how we should think of them and ourselves. But they also pose challenges at a more practical level. Especially as we turn these systems on ourselves through various “self-interpretational” practices, we must consider that we may be reshaping and reconstructing our own mental lives in the process. Having made this leap, my talk will attempt to sketch the outline of the new cognitive background we now inhabit, including what it might mean for the future of our minds.

Might these machines be viewed as systems that, under the right conditions, can extend our minds? On the face of it these machines easily meet the trust and glue criteria offered in the original Extended Mind paper (Clark and Chalmers 1998) and even meet the tighter criteria of entrenchment and individualization suggested by Sterelny (2010). And yet because of their cognitive profile, as dialogically fluent machines with deep reflective opacity (Andrada, Clowes, and Smart 2022), they might better be seen as doppelganger systems rather than self-extenders (Clowes 2020).

I will argue that there is no straightforward non-normative solution to the challenges LLMs pose to mental ontology. Ultimately our outlook on the future of human mental life in the time of this imminent moment of the mind-technology problem will be deeply informed by our views on what mental states are, but these are going through an unprecedented moment of change (Clowes, Gärtner, and Hipólito 2021, Clowes, Gaertner, and Theiner 2026).

Lucy Osler

-

Lucy Osler is a philosophy lecturer at the University of Exeter. Her research synthesises insights from phenomenology and 4E cognition to examine the social and affective dimensions of digitally mediated life. She is currently particularly interested in human experiences of and relationships with AI companions.

-

In this talk I explore the possibility of using AI chatbots to think with and about oneself. Where Marya Schechtman's recent paper on this foregrounds questions of self-knowledge, I am interested in the ambiguous phenomenology of the experience itself. Drawing on 4E cognition frameworks, I argue that when our cognition (and possibly self) extends into our environment, this can offer distinctive forms of self-engagement. Notebooks and journals offer a relatively simple example of this, whereas AI introduces dynamic and interactive extensions that respond and adapt. In particular, I consider what kind of self-dialogue interacting with AI might facilitate and whether it can scaffold the self-dialogue Arendt deems central to solitude.